My daughter asked me what AI is.

Kids ask the best questions because they haven’t learned to accept vague answers yet. She wanted to know what it actually does. Not “it’s like a smart computer” — she’s heard that. What does it do?

I tried three different explanations before one landed. That process — stripping away everything until only the true thing remained — turned out to be more useful than anything I’d read. So here’s the version I’d give anyone who wants a straight answer, with no jargon and no skipping the hard parts.

What is AI?

Imagine you want to teach your dog to recognize cats. You can’t explain it in words — the dog doesn’t understand words. So instead, you show her a thousand pictures. “Cat.” “Cat.” “Not a cat.” “Cat.” After enough pictures, she starts to get it. Not because you explained the rules, but because she found the pattern herself.

That’s AI. Instead of writing rules for a computer to follow, you show it millions of examples and let it find the pattern on its own.

That’s the whole idea. Everything else is details.
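For readers who like to see an idea as code, here is a minimal sketch of "learning from examples" in Python. Everything in it is an assumption made up for illustration: the two measurements (weight and ear pointiness), the numbers, and the function name `guess`. It simply copies the label of the most similar example it has seen, which is one very simple way to learn from examples and not how any particular AI product works.

```python
from math import dist

# Made-up training examples: (weight in kg, ear pointiness from 0 to 1) -> label.
# These numbers are invented purely for illustration.
EXAMPLES = [
    ((4.0, 0.90), "cat"),
    ((3.5, 0.80), "cat"),
    ((5.0, 0.95), "cat"),
    ((30.0, 0.30), "not a cat"),
    ((22.0, 0.40), "not a cat"),
    ((12.0, 0.20), "not a cat"),
]


def guess(animal, examples=EXAMPLES):
    """Label a new animal by copying the label of the most similar example."""
    _, nearest_label = min(examples, key=lambda ex: dist(ex[0], animal))
    return nearest_label


print(guess((4.2, 0.85)))   # a small animal with pointy ears
print(guess((25.0, 0.35)))  # a large animal with floppy ears
```

Notice that nowhere in the code is there a rule like "cats weigh less than 6 kg." The only knowledge is in the examples, so changing the examples changes the answers.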

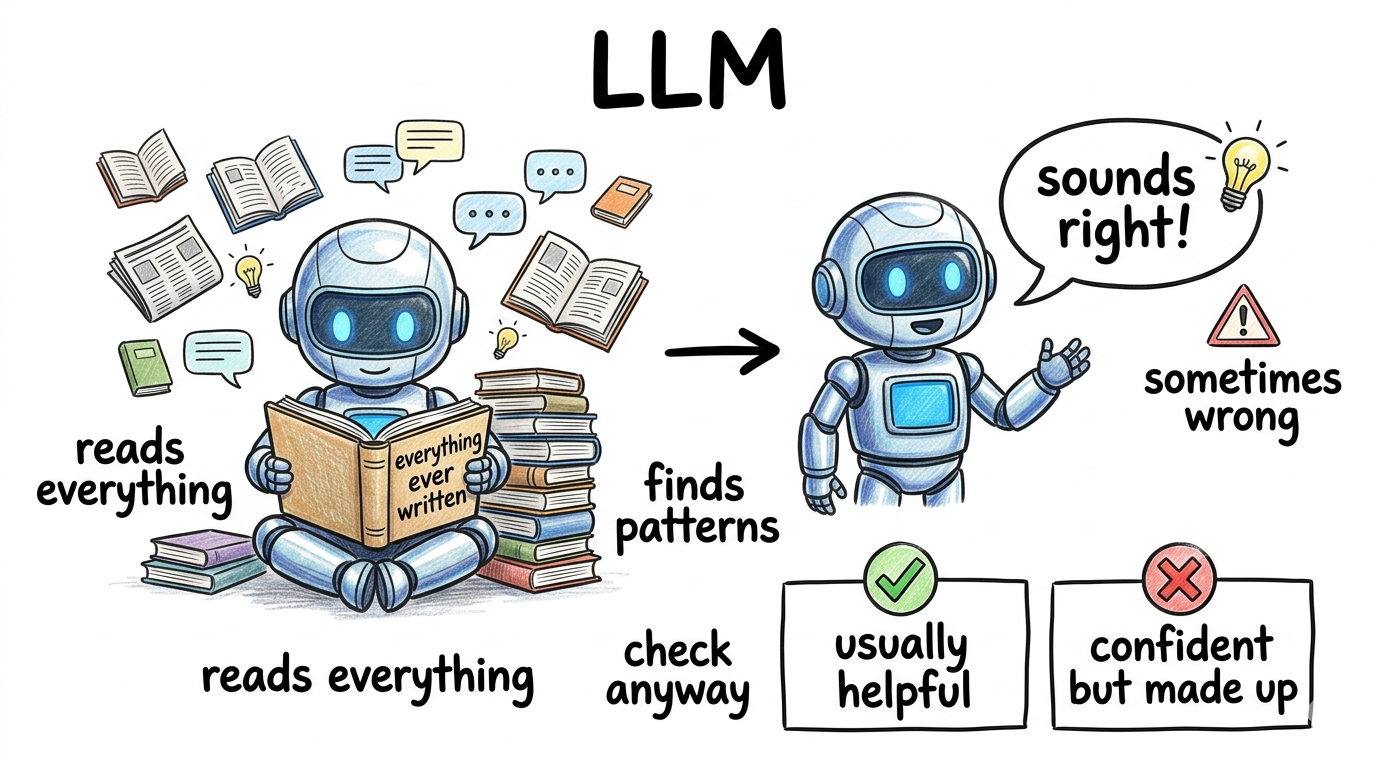

What is an LLM?

LLM stands for Large Language Model. It’s the technology behind ChatGPT, Claude, and most of the AI assistants you’ve heard about.

Think of it like a very talkative parrot.

This parrot has spent its whole life listening — to every book, every website, every conversation it could find. Billions of words. And now it’s very, very good at continuing a sentence. You say “the sky is…” and it says “blue.” You ask it a question and it gives you something that sounds like an answer — because somewhere in all that listening, it heard a similar question with a similar answer.

The key word is “sounds.” The parrot doesn’t understand what it’s saying. It’s pattern-matching at extraordinary scale. Most of the time that’s enough to be genuinely useful. Sometimes it isn’t, and we’ll get to that.
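The parrot idea can be sketched in a few lines of Python. This is a toy, not how a real LLM is built: it only counts which word tends to follow which, while real models are vastly larger and look at far more context. The function names `listen` and `continue_sentence` and the tiny "heard" text are hypothetical, chosen just for this illustration.

```python
from collections import Counter, defaultdict


def listen(text):
    """Count which word follows which in the text the 'parrot' has heard."""
    follows = defaultdict(Counter)
    words = text.lower().split()
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def continue_sentence(follows, prompt):
    """Return the word heard most often after the prompt's last word."""
    words = prompt.lower().split()
    if not words or words[-1] not in follows:
        return None  # never heard this word, so there is nothing to repeat
    return follows[words[-1]].most_common(1)[0][0]


# A tiny, invented "lifetime of listening".
HEARD = "the sky is blue . the sea is blue . the grass is green ."
parrot = listen(HEARD)

print(continue_sentence(parrot, "the sky is"))    # blue
print(continue_sentence(parrot, "the grass is"))  # blue
```

The second answer is the interesting one. The toy parrot says the grass is "blue" because "blue" is what it heard most often after "is." It sounds just as sure as it did about the sky, and it is wrong.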

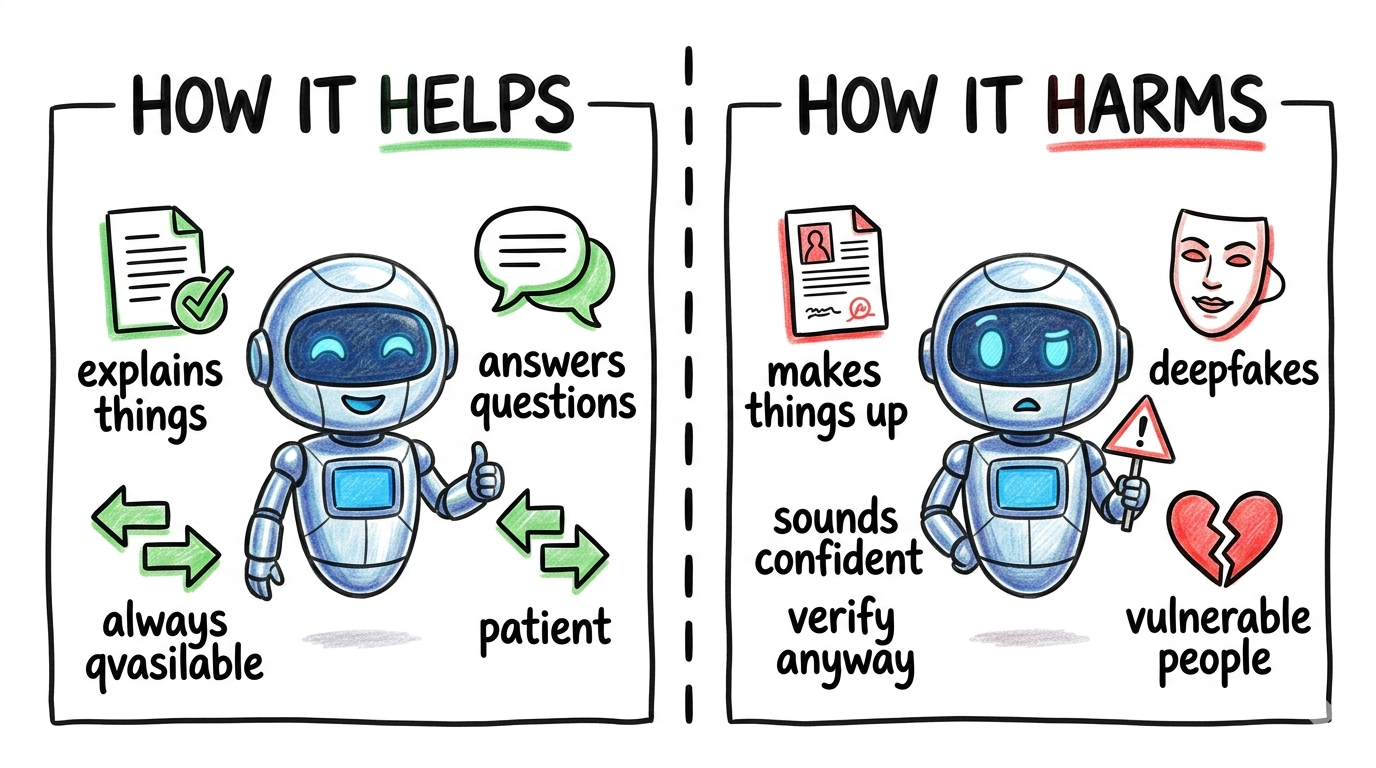

How can it help us?

The parrot has read everything. That turns out to be genuinely useful — and not just for people who work in tech.

It explains complicated things in plain language. Medical results, legal documents, tax forms — things that used to require an expensive appointment to decode. Paste the confusing text in and ask “what does this actually mean?” You still need professionals for decisions. But now you can walk into those appointments understanding what you’re talking about.

It helps with writing. Not just drafts and edits — translation too. Writing in a second language, composing a formal letter when you’re not sure of the words, making something sound professional when it doesn’t yet. These used to require someone else’s help. Now you can get a first draft at 11pm when no one is available.

It does the tedious work so you can do the interesting part. Summarizing a 90-minute meeting. Sorting through a messy spreadsheet. Writing the first draft of a report you’ll then rewrite in your own voice. Debugging a piece of code at midnight. These tasks aren’t hard — they’re slow. AI compresses them, which means you spend more of your day on the parts that actually need a human brain.

It answers questions patiently, without judgment. My daughter asks me why the sky is blue about once a week. I love her for it. But there are questions people don’t ask because they feel like they should already know the answer, or they don’t want to bother anyone, or it’s 2am. AI answers those questions, every time, without making you feel small for asking.

It’s already built into the tools you use. Your email suggests replies. Your phone edits your photos. Your search engine summarizes results before you click. AI isn’t something you have to seek out anymore — it’s woven into everyday software. Understanding what it’s doing in the background helps you decide when to trust its suggestions and when to override them.

How can it harm us?

Here’s where most explanations get vague. I’ll try to be specific.

It makes things up. Confidently. Without knowing it’s doing it.

The parrot doesn’t have an “I’m not sure” voice. When it doesn’t know something, it generates something that sounds like an answer — because that’s all it can do. It has no way to check whether what it’s saying is true.

In 2023, two lawyers submitted legal documents to a court citing six case precedents. The judges, the quotes, the dates — all made up by ChatGPT. The lawyers were sanctioned. They had trusted the output without checking. The AI had no idea the cases didn’t exist. It just produced something that sounded like a legal citation.

For a question about a recipe, this barely matters. For a question about medication, symptoms, or legal rights, it can.

The rule: AI is a brilliant starting point. It’s a terrible final source. Always verify anything important.

Scammers now have access to something very powerful.

In a case reported by Hong Kong police in early 2024, a finance employee at a multinational firm joined a video call with the company’s CFO, who asked for an urgent transfer. The CFO was right there on screen. Several senior colleagues were on the call too. The employee trusted it and sent about $25 million.

Every other person on that call was a fake: AI-generated faces and voices.

The old rule — “if I can see them and hear them, it’s real” — no longer holds. AI can clone a voice from a short audio clip. It can generate a face that moves and speaks. The people most likely to be targeted are the people who haven’t heard this yet.

If someone contacts you urgently asking for money or information — even if it looks and sounds exactly like someone you know — call them back on a number you already have. Not the one they gave you. That one step breaks most of these attacks.

It remembers what you tell it — and you might not think about who else sees it.

People paste things into AI without a second thought — medical symptoms, salary details, company documents, private conversations. Most AI tools send that data to remote servers. Some use it to train the next version of the model. That means your private input could influence what the system says to someone else later — or end up in a dataset you never consented to.

Before you paste something sensitive, ask: would I put this on a public forum? If not, check the tool’s privacy policy — or don’t paste it.

It changes what jobs look like — and not always in the ways people expect.

AI isn’t replacing most jobs overnight. But it is changing what’s valued inside them. Tasks that used to take hours — research, first drafts, data cleanup — now take minutes. That’s good if you’re the one using it. It’s unsettling if those tasks were your entire role. The people who adapt fastest aren’t the most technical — they’re the ones willing to learn what AI can handle and focus on what it can’t: judgment, relationships, context that doesn’t fit in a prompt.

It’s most dangerous for people who trust it the most.

A 14-year-old in Florida was struggling and turned to a Character.AI chatbot for support. He died by suicide. His mother filed a lawsuit alleging that the chatbot’s conversations with him, including exchanges about suicide, contributed to his death. The company has since announced new safety measures.

The AI wasn’t cruel. It had no intent. It generated responses based on patterns — and the patterns, in that moment, caused catastrophic harm. The people most likely to rely on AI when they’re at their lowest are also the least likely to have someone nearby to catch what it gets wrong.

What I told my daughter

She’s too young to use AI on her own, but she’s already curious about it — it’s everywhere. So I gave her two rules, simple enough to remember:

It knows a lot of things. But it sometimes makes up answers when it doesn’t know. So if it tells you something important, check.

And if something online feels weird — even if it sounds exactly like someone you know — tell a grown-up before you do anything.

Honestly, those two rules work for adults too. Most of the harm I described above (made-up answers, faked calls, leaked private information) comes down to trusting without verifying, or acting before pausing. The rules scale; the stakes just get bigger.

That’s the whole conversation for now. Everything else we can fill in as she grows.

Three things I’d want anyone to take away from this:

AI is genuinely useful — the most capable assistant most people have ever had access to. For explaining, writing, translating, answering questions at odd hours — it’s real, it works, and it’s worth using.

The biggest danger isn’t robots. It’s believing something wrong. The parrot sounds confident when it’s wrong. There’s no doubt-voice. Treat it like a brilliant friend who sometimes misremembers — useful for a starting point, not the final word on anything that matters.

The harm comes fastest to people who trust it most, without knowing its limits. Teenagers in crisis. Employees under pressure. Anyone relying on it without a second opinion nearby is more exposed than they realize.

The most important thing isn’t understanding how it works. It’s knowing when to double-check — and when to call an actual person.

If you want to go deeper on where AI judgment ends and human responsibility begins, I wrote more about it in “Human Error vs Machine Judgment.”